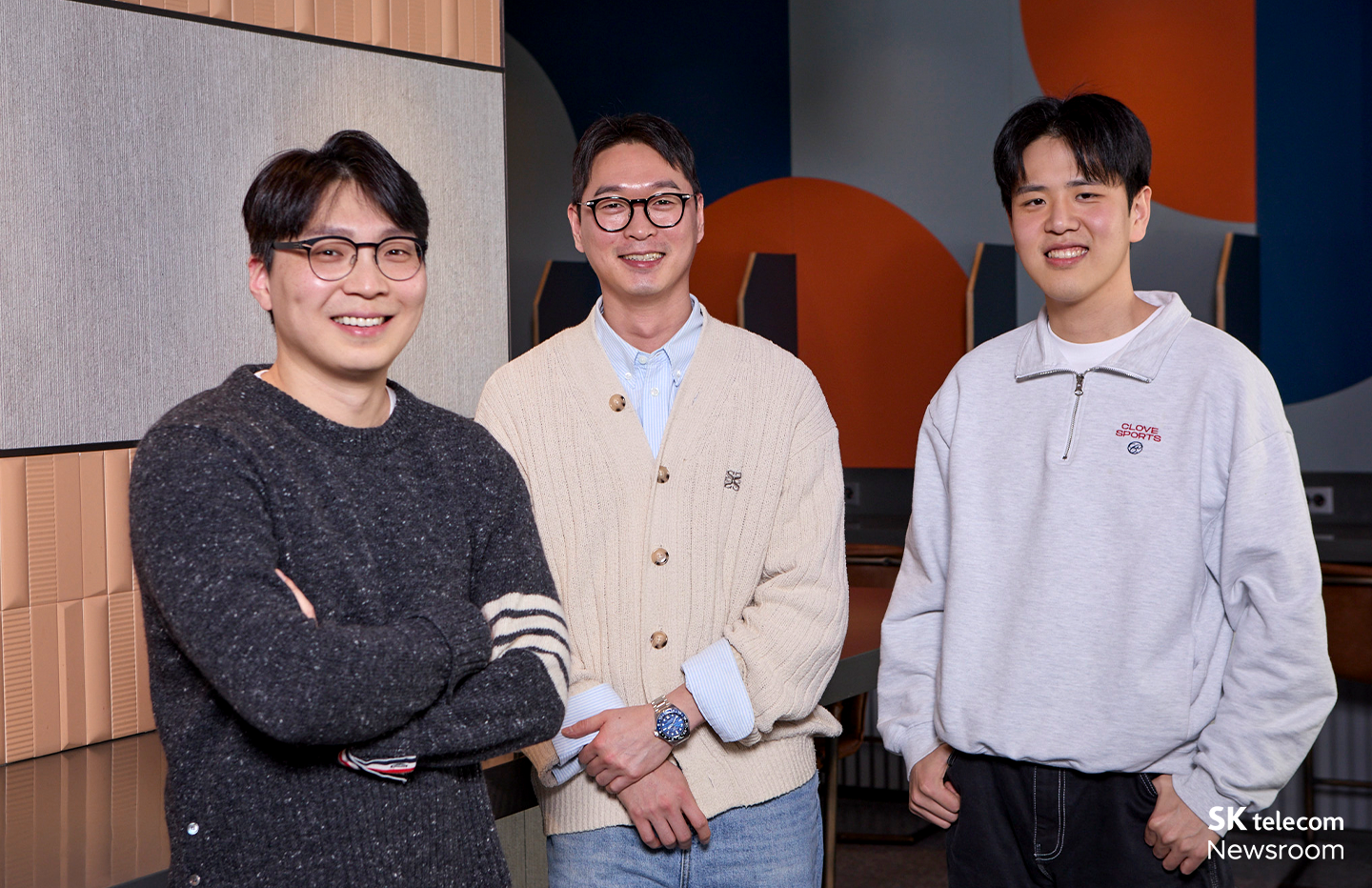

(From left) Yang Sang-hoon, Lee Jae-hyup, and Choi Ji-man of the GPUaaS Product Development Team

At MWC26, SK Telecom’s GPU cluster “Haein” won the Global Mobile Award (GLOMO) in the Best Cloud Solution category. SKT has now received this award for three consecutive years: “Cloud Radar” in 2024, “Petasus AI Cloud” in 2025, and “Haein” this year.

This year’s award is especially meaningful because it recognizes not an individual technology, but the completion of an entire AI infrastructure. SKT Newsroom spoke with Choi Ji-man, Yang Sang-hoon, and Lee Jae-hyup from the GPUaaS Product Development Team about the story behind the creation of SKT’s AI infrastructure, Haein.

Beyond technology to AI infrastructure: “Haein,” completed by bringing together multiple technologies

Q. What does the GLOMO award signify for SKT?

A. Choi Ji-man: Cloud Radar in 2024 and Petasus AI Cloud in 2025 were each recognized for their technical excellence as individual solutions. Haein, on the other hand, brings all those technologies together into one integrated service. GPU-centric AI infrastructure demands highly complex architecture and engineering capabilities.

What sets Haein apart is that we took SKT’s existing telecom assets — our network lines, data centers, operational know-how — and combined them with AI to build an infrastructure that actually works in the real world. It’s not just a technology; it’s a live, operational AI infrastructure service.

A. Yang Sang-hoon: The technologies that won previous awards are now actively utilized in the Haein cluster. The technical capabilities we have built over time did not remain isolated achievements. They accumulated year after year within a broader vision and ultimately came together as a fully realized service on top of a single integrated infrastructure called Haein.

It is also meaningful in that we established a Korean sovereign AI infrastructure by combining telco assets such as data centers and network circuits. I believe our example can serve as a compelling reference case for how telecom operators can find new growth engines through AI.

GPUs as a service for AI development: A new infrastructure model – GPUaaS

To understand Haein, we have to begin with the concept of GPUaaS (GPU as a Service). As the AI industry has expanded at a rapid pace, the efficient use of GPU infrastructure has become a critical challenge, and GPUaaS has emerged as one of the key answers.

This rapid expansion has fueled a massive spike in GPU demand, triggering a widespread ‘GPU shortage’. In this environment, GPUaaS emerged as a cloud service model dedicated to providing specialized GPU infrastructure.

Lee Jae-hyup of the GPUaaS Product Development Team

Q. Could you explain what GPUaaS is?

A. Lee Jae-hyup: Simply put, you can think of GPUaaS as a ‘car rental service’ for GPUs. Just as you rent a car instead of buying one when you need a ride, GPUaaS allows users to access high-performance GPU servers, storage, networking, and other infrastructure required for AI development without having to build and own it themselves. They can use exactly the amount they need, for as long as they need it.

Enterprises and research institutions, for example, need large-scale GPU infrastructure to develop AI models. However, GPU servers are extremely expensive and take a long time to deploy. GPUaaS addresses this by offering the infrastructure as a service, so users can access the GPU resources they need for only the duration required.

A. Yang Sang-hoon: The cloud industry has typical service models such as IaaS, PaaS, and SaaS. Rather than building and managing servers on their own, customers use computing resources from cloud providers such as AWS or Microsoft Azure. GPUaaS can be understood as a cloud service model that focuses specifically on providing the GPU computing power required for AI workloads.

Choi Ji-man of the GPUaaS Product Development Team

Q. What role does the GPUaaS Product Development Team play in Haein?

A. Choi Ji-man: Haein is Korea’s largest GPU infrastructure, built as a single cluster with more than 1,000 of NVIDIA’s next-generation AI GPUs, the B200. It is not simply a collection of GPU servers, but a fully integrated AI infrastructure encompassing high-speed networking, storage, and an operational platform.

In line with the national AI strategy, this infrastructure currently provides computing resources to organizations participating in Korea’s Sovereign AI Foundation Model Project. Our team is responsible for the full spectrum of work required to bring it into commercial operation, including infrastructure planning, architecture design, cost optimization, deployment, and operations.

Going forward, we plan to leverage the operational expertise and data gained through Haein to continuously evolve our AI infrastructure business, staying aligned with expanding industry trends such as Physical AI.

Speed, Security, and Performance: The reality of building AI infrastructure

Because Haein was part of the national AI infrastructure project, the team had to consider multiple factors simultaneously, going well beyond the technical build itself. Within limited timelines and under a wide range of requirements, it took constant deliberation and dedicated effort to bring Haein to life.

Yang Sang-hoon of the GPUaaS Product Development Team

Q. What were the most important and most challenging aspects of the project?

A. Yang Sang-hoon: There were four main priorities in this project: speed, security, flexibility, and the balance between performance and cost.

First, Speed. Because we had to align with the project timeline led by the Ministry of Science and ICT of the Republic of Korea, we had only about two months for the actual deployment.

Some equipment had long lead times too, so it wasn’t easy. Despite these constraints, we couldn’t just build it fast; we had to ensure the infrastructure was finalized exactly according to our intended architecture and tech stack.

Second, Security. As a project carried out in collaboration with the government, we had to meet strict national cloud information security standards. At the same time, we planned for future expansion into the private market, including obtaining all relevant security certifications.

Third, Flexibility. Since the cluster structure needed to be scalable enough to meet private-market demand after the government project, striking the right balance between robust security and an expandable infrastructure was a critical challenge.

Lastly, the balance between performance and cost. We had to make highly detailed decisions across the board – from whether to use the previous-generation H200 GPUs or the latest B200s, to how to configure the network and storage – carefully determining where to maximize performance and where to control costs.

In the end, what allowed us to break through all these challenges was the people. Whenever problems arose, there were team members who would rush to the site to solve them proactively. We worked together day and night, putting our heads together to find solutions. Through that process, I was reminded once again that what ultimately completes a project is the people.

Q. What does Haein mean to you?

A. Lee Jae-hyup: To me, Haein signifies ‘Proof.’ As it was my first time handling this type of work, there were gaps to fill, but thanks to my teammates, I was able to play my part. It was proof of my own capability, and more importantly, proof that our team—who never lost their smiles even in the toughest situations—is truly ‘Tier 1.’

A. Yang Sang-hoon: To me, Haein is ‘the second chapter of my career.’ I had worked in cloud and on-premises infrastructure for years, but GPU infrastructure is a bit different from what I had learned before. Through Haein, I’ve officially stepped into the GPU and HPC market, and it became the starting point of a new phase in my career, one that will continue alongside AI.

A. Choi Ji-man: To me, Haein feels like my ‘Pet.’ Simply seeing Haein makes me proud, and it’s something I’d proudly show anywhere. At the same time, it is also something that constantly needs care and attention. I see it as something precious that I will continue to manage and nurture with affection.

The GPU cluster Haein is more than a technological achievement; it has become an important foundation for SKT’s AI strategy. Behind the GLOMO award lies the accumulation of countless decisions and efforts, and the dedication of the people who solved issues on the ground.

Going forward, Haein will continue to serve as a foundation connecting even more AI technologies and services. The next journey for the GPUaaS Product Development Team has only just begun.