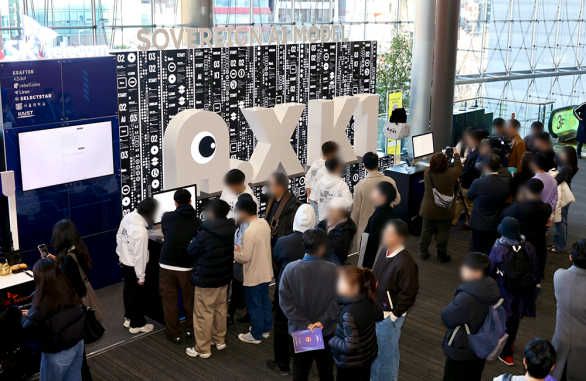

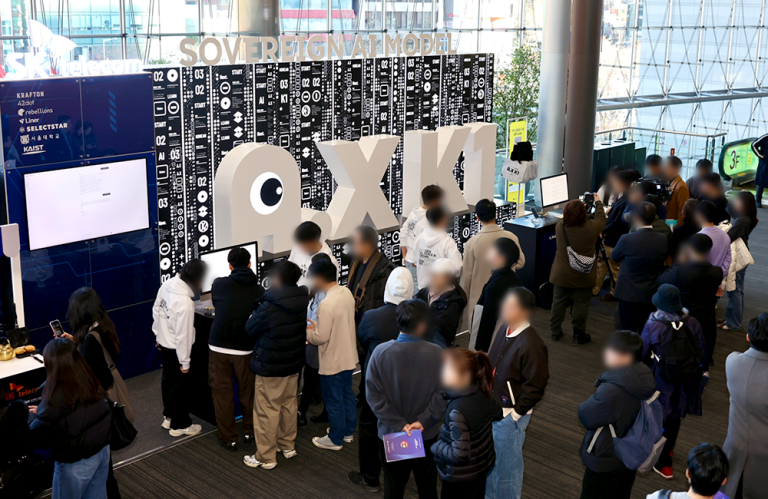

SKT is working closely with the MIT Generative AI Impact Consortium (MGAIC), a global industry–academia research consortium led by the Massachusetts Institute of Technology, to drive AI innovation.

MGAIC serves as a cross-industry AI collaboration platform bringing together global leaders such as The Coca-Cola Company, OpenAI, and SKT. Through more than 60 active projects, the consortium is generating tangible advancements in AI across multiple industries.

Through the MGAIC interview series, SKT Newsroom will present insights from MIT professors collaborating with SKT, sharing their research findings and perspectives on the evolving AI landscape.

For the first interview, we spoke with Professor Pattie Maes of the MIT Media Lab, who has been collaborating with SKT on foundational research into AI agent behavior. In this interview, she discusses what we must prepare today for a future where AI agents and humans coexist, and explains the global competitiveness of SKT’s sovereign AI strategy.

Professor Pattie Maes at MIT

The key factors for the successful adoption of AI agents are ‘capability’ and ‘trust’

Q1. In your paper ‘A Framework for Studying AI Agent Behavior’, you explored the behavioral characteristics of AI agents. Could you share what led you to this topic and highlight the key findings of your research?

For software agents to be successful, two challenges have to be addressed. The first is “competence”: making sure the agent has the knowledge and skills to assist the user with tasks. The second is “trust”: how do we make sure that the user can trust the agent to “do the right thing” and be “aligned” with how the user would do the task themselves?

It is key that we better understand how AI agents behave, how their behavior can be nudged or influenced, and whether their behavior aligns with how people want tasks done. To make progress on this trust challenge, we have created a framework that allows us to make small changes in an agentic environment while keeping everything else constant. That way, we are able to study which factors influence the agent’s decision-making and ultimately build a better understanding of agent behavior.
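The idea of changing one factor while holding everything else constant can be illustrated with a minimal Python sketch. This is not the framework from the paper: the `Environment` fields, the `toy_agent` scoring rule, and the `one_factor_study` helper are all hypothetical stand-ins chosen for illustration, and a real study would call an actual AI agent in place of the toy function.

```python
from dataclasses import dataclass, replace
from typing import Any, Callable, Dict


@dataclass(frozen=True)
class Environment:
    """Hypothetical task setup: an agent chooses between products A and B."""
    price_a: float = 10.0
    price_b: float = 12.0
    rating_a: float = 4.0
    rating_b: float = 4.5
    a_listed_first: bool = True


def toy_agent(env: Environment) -> str:
    """Deterministic stand-in for a real agent (assumption, not a real model).

    Scores each option from rating, price, and a small bonus for whichever
    option is listed first, then returns 'A' or 'B'.
    """
    bonus_a = 0.3 if env.a_listed_first else 0.0
    bonus_b = 0.0 if env.a_listed_first else 0.3
    score_a = env.rating_a * 2 - env.price_a * 0.5 + bonus_a
    score_b = env.rating_b * 2 - env.price_b * 0.5 + bonus_b
    return "A" if score_a >= score_b else "B"


def one_factor_study(
    agent: Callable[[Environment], str],
    baseline: Environment,
    changes: Dict[str, Any],
) -> Dict[str, bool]:
    """For each factor, change only that factor and report whether the
    agent's decision differs from its decision on the baseline."""
    base_choice = agent(baseline)
    results = {}
    for field_name, new_value in changes.items():
        # replace() copies the baseline and overrides a single field,
        # so every other factor stays constant.
        variant = replace(baseline, **{field_name: new_value})
        results[field_name] = agent(variant) != base_choice
    return results


if __name__ == "__main__":
    changes = {"a_listed_first": False, "price_a": 10.5, "rating_b": 4.8}
    for factor, flipped in one_factor_study(toy_agent, Environment(), changes).items():
        print(f"{factor}: decision changed = {flipped}")
```

Because the toy agent is deterministic, one run per variant is enough here. With a real, stochastic agent, each variant would presumably need repeated trials and a comparison of choice frequencies rather than single decisions.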

Q2. Based on your findings, what strategic advantages do you see in companies developing and validating their own proprietary foundation models, such as SKT’s A.X K1?

The more we rely on AI models, the more important it is that we have a full understanding of those models: their capabilities, their strengths, their weaknesses, their biases and more. That starts with knowing what data a model has been trained on, but also includes knowing how the model has been fine-tuned. Ideally, models are also open, so that the network and weights can be inspected and a deeper understanding of the model’s behavior, and of how it can be tweaked, can be developed.

A second important reason for companies to create their own models is to avoid depending on products and services developed by companies based in very different countries and jurisdictions. Increasingly, AI is seen as being of political, economic and security interest. As a company operating in one country, I would not want to rely entirely on technology that is controlled by another country, especially when that technology is essentially a black box.

Lastly, it is important to have homegrown models if you care about data privacy and security, since there is otherwise no 100% guarantee that private data (whether from an individual or a corporation) will not be leaked or collected.

Q3. As humans and AI agents increasingly coexist and make decisions together, what do you believe is the most urgent challenge that industry and academia must address collaboratively?

I believe the most urgent issue is the one we focused on in this collaboration with SKT: we need to understand the behavior of AI agents so that we can more effectively predict what they will do in certain situations, what their limits are, and when we can and cannot rely on them. Traditionally, humanity has relied on technologies that we understand and can model and predict, but AI and agents are still mostly a black box: they may surprise us, are unreliable and unpredictable, and hence make us vulnerable. Never before have we had decision-makers that cannot be held accountable, so we must act now to advance the art and science of understanding AI and agent behavior.

Q4. With the rapid growth in the number of AI agent service users, what advice would you most like to offer to users?

It is important for users to tread very carefully when using agents to avoid unpleasant surprises, especially for tasks that are highly critical or sensitive in nature. It is also important to make sure that we do not lose certain skills ourselves by relying too heavily on AI systems to do things for us.

SKT’s move to build sovereign AI expertise is a highly strategic choice

Q5. This research was conducted in collaboration with SKT as part of the MGAIC initiative. What is the significance of this collaboration, and how do you see it evolving in the future?

We really appreciate the collaboration with SKT. Their support is making it possible for us to advance the understanding of AI technologies, specifically, to advance the science of agent behavior. It is critical for the ultimate success of AI that we better understand and predict AI behavior.

Q6. SKT is working to secure leadership in sovereign AI and to advance AI infrastructure and services, and the MIT industry–academia collaboration can be seen as part of those efforts. How do you evaluate SKT’s AI strategy and competitiveness?

I believe it is a very smart strategic choice on the part of SKT to develop their own models and, more generally, their own expertise in AI. As I mentioned earlier, given that AI is playing an increasingly large role in individual, corporate and national interests, it is smart not to just adopt a black box solution built by someone else, even if that system may be more advanced in performance, but to develop home-built solutions that can be tweaked and better understood.

Q7. As calls grow for sovereign AI optimized for non-English languages and local environments, what differentiators should sovereign AI pursue beyond general performance? In the global race for sovereign AI, what unique strengths does Korea have?

The current AI models are rapidly influencing culture wherever they are used. The information and examples they generate are heavily biased toward the country where the model was developed. For South Korea to protect and support its unique culture, including its arts, language, literature, points of view and ways of approaching things, it is important to build Korean models that are trained on Korean data and managed by Korean entities. South Korea has a long tradition of technological innovation, which means it is well positioned to do so.